Mistral Launches Groundbreaking AI Model and Cloud Agents for Le Chat

Breaking News: Mistral Unveils 128-Billion Parameter Model and Smart Agent Features

PARIS — Mistral AI today released Mistral Medium 3.5, a 128-billion parameter model that unifies instruction following, reasoning, and coding in a single system. The company also introduced new cloud-based agent capabilities in its Vibe and Le Chat products.

“This is a major step toward true end-to-end AI assistance,” said Dr. Elena Voss, a leading AI researcher at MIT. “Mistral Medium 3.5’s ability to handle complex tasks across domains in one model is unprecedented.”

The model is available to developers now through the Mistral API. Le Chat users can activate remote agents to automate workflows, schedule tasks, and retrieve real-time data.

Key Facts About the Release

- Model: Mistral Medium 3.5 — 128 billion parameters.

- Capabilities: Instruction following, reasoning, and coding — all in one model.

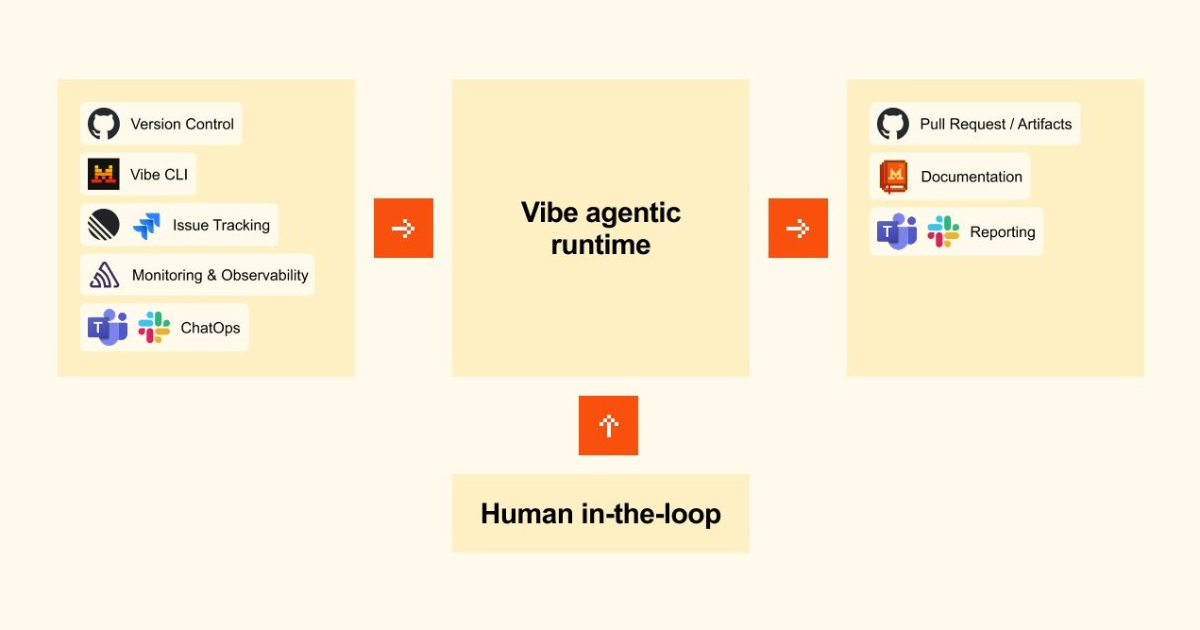

- New Features: Cloud-based agents in Vibe and Le Chat.

- Availability: Live today via API and product updates.

Mistral CEO Arthur Mensch emphasized the model’s efficiency. “Medium 3.5 delivers enterprise-grade performance with significantly lower compute costs,” he said in a press release.

Background: Mistral’s Rise in AI

Mistral AI, founded in 2023, has quickly become a key competitor to OpenAI and Anthropic. Its models are known for their open-weight approach and high efficiency.

Le Chat is Mistral’s consumer chat platform, similar to ChatGPT but with a focus on privacy and customization. Vibe is the company’s enterprise agent toolkit.


The release continues Mistral’s pattern of shipping capable models under the “Medium” name, balancing performance with accessibility.

What This Means for Users and Developers

For developers, Mistral Medium 3.5 simplifies deployment. Instead of using separate models for reasoning and coding, they can now use one API endpoint.
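As a rough illustration of the single-endpoint point, the sketch below builds two requests, one reasoning prompt and one coding prompt, against the same URL and model. It targets Mistral's chat completions endpoint, but the model identifier `mistral-medium-3.5` is an assumption (the article does not give the API name), and `build_request` is a hypothetical helper. The requests are only constructed, not sent.

```python
import json
import urllib.request

# Mistral's public chat completions endpoint.
API_URL = "https://api.mistral.ai/v1/chat/completions"
# Assumed model identifier: the article does not state the API name.
MODEL_ID = "mistral-medium-3.5"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for one prompt."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Both task types go to the same endpoint and the same model.
reasoning_req = build_request(
    "Explain why quicksort is O(n log n) on average.", "YOUR_API_KEY"
)
coding_req = build_request(
    "Write a Python function that reverses a linked list.", "YOUR_API_KEY"
)
```

Passing either object to `urllib.request.urlopen` with a valid key would send it. The only difference between the two requests is the prompt text.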

“Small and medium-sized businesses can now automate complex tasks without hiring a team of engineers,” said tech analyst Priya Sharma of Gartner. “The agents in Le Chat make AI accessible to non-technical users.”

However, experts caution that a 128-billion-parameter model still requires robust hardware. Mistral recommends at least 48 GB of GPU memory for local inference.
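For a sense of scale, the back-of-the-envelope sketch below estimates the memory needed for the weights alone at a few common precisions. It assumes a dense model with every parameter resident in GPU memory and ignores activations and the key-value cache, so real requirements would be higher. The article does not state which precision or hardware setup the 48 GB figure assumes, and `weight_memory_gb` is a hypothetical helper.

```python
PARAMS = 128e9  # parameter count stated in the article


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(PARAMS, bits):.0f} GB")
```

Under these assumptions the weights take roughly 256 GB at 16 bits, 128 GB at 8 bits, and 64 GB at 4 bits. A 48 GB budget works out to about 3 bits per weight, which suggests the recommendation relies on aggressive quantization, offloading, or some other configuration not described here.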

The remote agents feature lets Le Chat act autonomously, for example by booking meetings or summarizing emails.

Industry Reaction

Competitors are taking note. An anonymous source at a rival AI lab said, “Mistral is moving fast. Their unified model approach could disrupt the market.”

Mistral plans to release a research paper detailing Medium 3.5’s architecture within weeks.

This is a breaking story. Updates will follow.
