NVIDIA's Nemotron 3 Nano Omni Model Unifies Multimodal AI with 9x Efficiency Leap

Breaking: NVIDIA Unveils Open Multimodal Nemotron 3 Nano Omni – Unifying Vision, Audio, and Text for AI Agents

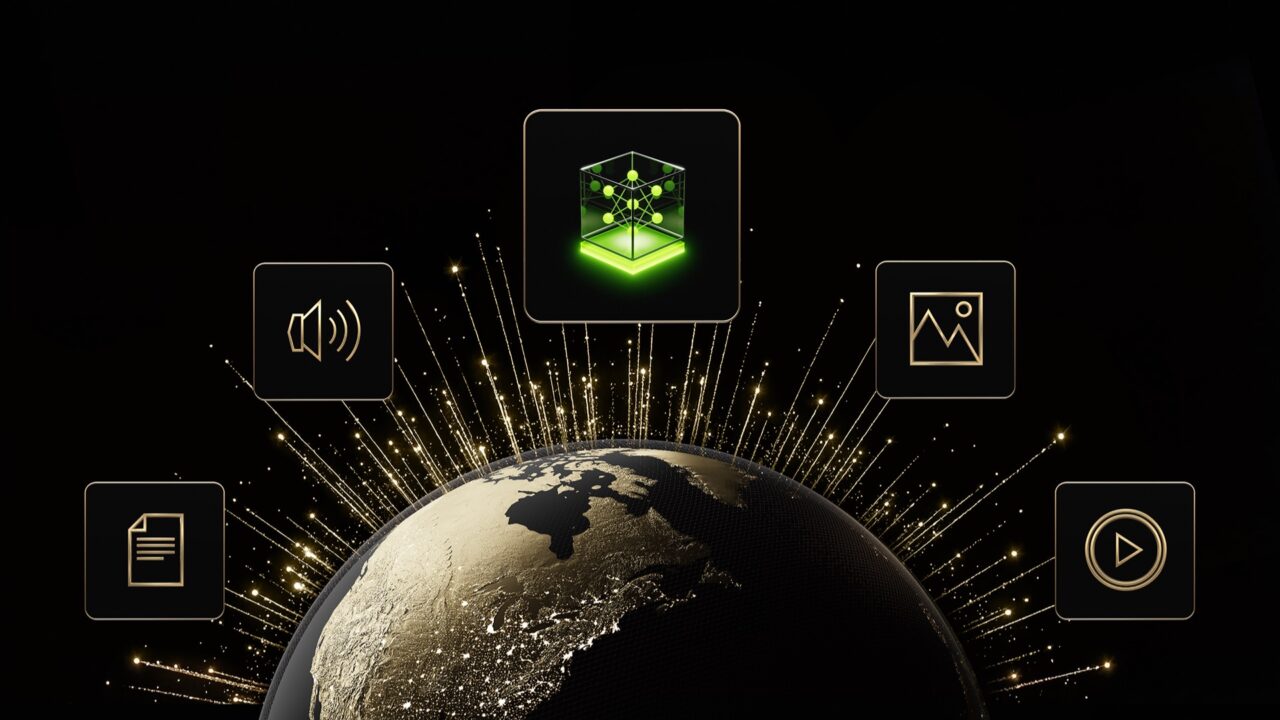

NVIDIA today announced the launch of Nemotron 3 Nano Omni, an open multimodal model that integrates vision, audio, and language processing into a single system. The model delivers up to 9x higher throughput than existing open omni models, enabling faster and more accurate AI agents for enterprises and developers.

Current agent systems typically rely on a separate model for each modality, which adds latency, fragments context, and raises costs. Nemotron 3 Nano Omni instead handles all input modalities in one pipeline, so agents can reason over video, audio, images, and text together.

Key Details at a Glance

- What it is: An open, omni-modal reasoning model with leading efficiency and accuracy.

- What it handles: Text, images, audio, video, documents, charts, and graphical interfaces as input; text as output.

- Who it’s for: Enterprises and developers building fast, reliable agentic systems requiring a multimodal perception sub-agent.

- How it works: Acts as the “eyes and ears” in a system of agents, complementing models like Nemotron 3 Super and Ultra or proprietary models.

- Why it matters: Leading multimodal accuracy with up to 9x higher throughput than other open omni models, reducing cost and improving scalability without sacrificing responsiveness.

- Architecture: 30B-A3B hybrid MoE (30 billion total parameters, 3 billion active) with Conv3D, EVS, and a 256K context window.

- Availability: April 28, 2026, via Hugging Face, OpenRouter, build.nvidia.com, and 25+ partner platforms.

Background: The Multimodal Bottleneck

AI agent systems today typically employ separate models for vision, speech, and language. Each pass through a different model increases latency, and context is lost as data moves between them. This fragmented approach also raises costs and lets inaccuracies accumulate from one stage to the next.

Nemotron 3 Nano Omni addresses these issues by unifying all modalities within a single model. Its hybrid Mixture-of-Experts (MoE) architecture, with 30 billion total parameters (3 billion active) and a 256K context window, handles complex document intelligence as well as video and audio understanding. The model tops six leaderboards across these domains.
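To make the distinction between total and active parameters concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind sparse MoE layers. The expert count, the top-k value, and the equal-sized experts are illustrative assumptions chosen so the ratio matches the 30B/3B figures. They are not NVIDIA's published configuration, and the toy ignores the non-MoE parts of a hybrid architecture.

```python
import math
import random

def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    peak = max(router_logits[i] for i in top)  # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def active_fraction(num_experts, k):
    """Share of expert parameters touched per token, assuming equal-sized experts."""
    return k / num_experts

# Hypothetical layout (assumption): 20 experts, 2 routed per token -> 10% active.
NUM_EXPERTS, TOP_K = 20, 2
TOTAL_PARAMS = 30_000_000_000  # 30B total, from the article

random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in router scores
chosen = top_k_route(logits, TOP_K)
active_params = TOTAL_PARAMS * TOP_K // NUM_EXPERTS

print("experts used for this token:", chosen)
print("active fraction:", active_fraction(NUM_EXPERTS, TOP_K))
print("active parameters:", active_params)
```

Each token is processed by only the selected experts, which is why compute per token tracks the active count rather than the total.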

Early Adopters and Evaluators

AI and software companies already adopting Nemotron 3 Nano Omni include Aible, Applied Scientific Intelligence (ASI), Eka Care, Foxconn, H Company, Palantir, and Pyler. Dell Technologies, Docusign, Infosys, K-Dense, Lila, Oracle, and Zefr are evaluating the model.

“To build useful agents, you can’t wait seconds for a model to interpret a screen,” said Gautier Cloix, CEO of H Company. “By building on Nemotron 3 Nano Omni, our agents can rapidly interpret full HD screen recordings — something that wasn’t practical before. This isn’t just a speed boost: It’s a fundamental shift in how our agents perceive and interact with digital environments in real time.”

What This Means for AI Agents and Enterprises

The unification of vision, audio, and language in a single model means agents can now process multimodal data in real time without the overhead of coordinating separate systems. For customer support agents, this translates to instantly analyzing screen recordings, call audio, and data logs within one inference pass.

For finance, parsing PDFs, spreadsheets, charts, and voice notes together streamlines complex workflows. Throughput of up to 9x that of other open omni models lowers operational costs while maintaining accuracy, making multimodal AI more practical for production deployments.
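The link between throughput and cost can be sketched with simple arithmetic. The snippet below assumes the hardware cost per hour is identical for both models and that the full 9x figure applies to the workload. The hourly price, the baseline throughput, and the choice of tokens per second as the unit are made-up placeholders, not measured values.

```python
def cost_per_million_tokens(gpu_cost_per_hour, tokens_per_second):
    """Serving cost for one million tokens at a steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return gpu_cost_per_hour / tokens_per_hour * 1_000_000

# Placeholder inputs (assumptions for illustration only).
GPU_COST_PER_HOUR = 4.00  # hypothetical hourly hardware price
BASELINE_TPS = 1_000      # hypothetical baseline throughput, tokens per second
SPEEDUP = 9               # upper bound quoted in the article

baseline = cost_per_million_tokens(GPU_COST_PER_HOUR, BASELINE_TPS)
faster = cost_per_million_tokens(GPU_COST_PER_HOUR, BASELINE_TPS * SPEEDUP)

print(f"baseline cost per 1M tokens: {baseline:.3f}")
print(f"cost per 1M tokens at 9x:    {faster:.3f}")
print(f"ratio:                       {faster / baseline:.3f}")
```

Whatever placeholders are used, the ratio comes out to 1/9, because cost per token scales inversely with throughput under these assumptions. Real savings depend on how close a given workload gets to the quoted upper bound.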

Nemotron 3 Nano Omni also offers full deployment flexibility—enterprises can run the model on-premises, in the cloud, or at the edge, giving them control over data privacy and latency. This positions it as a foundational building block for next-generation agentic systems that must perceive and reason across multiple channels.

Availability and Next Steps

The model will be openly available starting April 28, 2026, through Hugging Face, OpenRouter, and NVIDIA's build.nvidia.com platform, plus more than 25 partner platforms. Developers can integrate it immediately to build faster, leaner multimodal agents.
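As a rough sketch of what integration might look like, the snippet below builds a chat request in the OpenAI-compatible format that OpenRouter accepts, combining text with an image URL. The model identifier is a placeholder, since the article does not give the published slug, and the image URL and API key are hypothetical. The request is only constructed here, not sent.

```python
import json

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "<model-slug-from-provider-catalog>"  # placeholder: not stated in the article

def build_request(prompt, image_url, api_key):
    """Return (url, headers, body) for a text + image chat completion request."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    payload = {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }
    return OPENROUTER_URL, headers, json.dumps(payload)

url, headers, body = build_request(
    "Summarize the chart in this screenshot.",
    "https://example.com/screenshot.png",  # hypothetical image location
    api_key="YOUR_API_KEY",                # hypothetical credential
)
print(url)
print(body)
```

The returned body can be posted with any HTTP client. How audio and video inputs are encoded varies by provider, so the hosting platform's documentation is the place to confirm those formats.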

For more details, see the Background and Early Adopters and Evaluators sections above.
