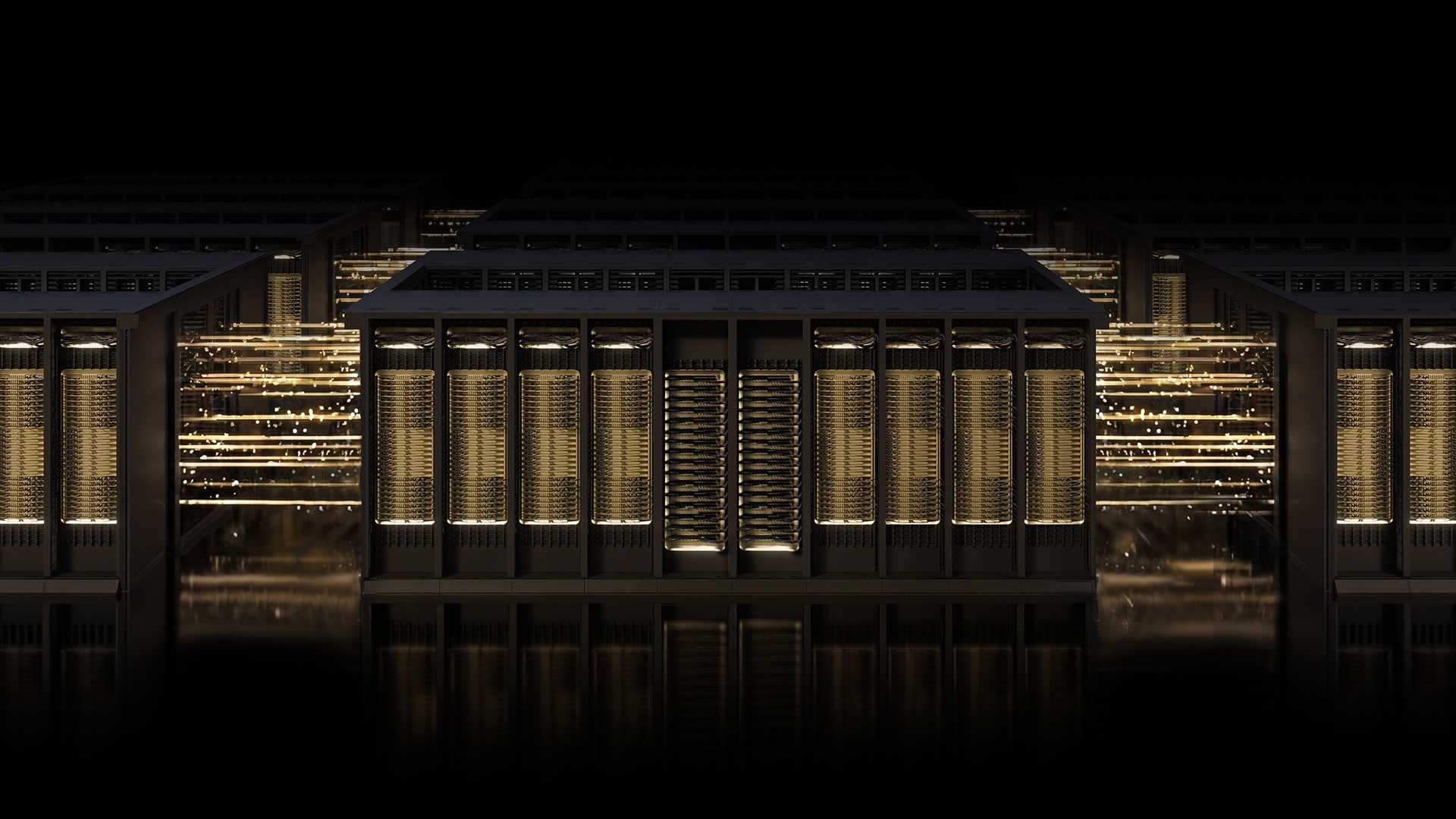

NVIDIA Spectrum-X with MRC Sets New Benchmark for Gigascale AI Networking

NVIDIA has announced that its Spectrum-X Ethernet fabric, now featuring the Multipath Reliable Connection (MRC) protocol, has been deployed at scale by OpenAI, Microsoft, and Oracle, a milestone the company says sets a new industry standard for AI networking. The open specification for MRC was released today through the Open Compute Project, marking a pivotal shift in how massive AI training clusters handle data traffic.

MRC lets a single RDMA connection spread its data across multiple network paths at once, improving throughput, load balancing, and fault tolerance. The technology was first proven in production on Spectrum-X hardware and is now available for broader adoption.

“Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of industrial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

Background

The race to build the world’s most powerful AI factories demands networking that can keep pace with rapidly growing model sizes. Traditional Ethernet often struggles with congestion, packet loss, and underutilized links during long training runs, leading to expensive GPU idle time.

NVIDIA’s Spectrum-X platform addresses these challenges with purpose-built hardware, deep telemetry, and intelligent fabric control. MRC, an RDMA transport protocol developed in collaboration with Microsoft and OpenAI, distributes traffic across all available paths and dynamically reroutes around slowdowns, much like a navigation app steering cars through a city grid.
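To make the multipath idea concrete, here is a minimal Python sketch of one logical connection spraying packets across several paths while steering away from failed or congested ones. It is a toy model under stated assumptions, not MRC's actual algorithm: the `Path` and `MultipathSender` classes, the `congestion` signal, and the path names are hypothetical, and a real RDMA transport would also have to handle out-of-order arrival and retransmission, which this sketch omits.

```python
import random
from dataclasses import dataclass


@dataclass
class Path:
    """One network path between sender and receiver (hypothetical model)."""
    name: str
    healthy: bool = True
    congestion: float = 0.0  # hypothetical signal: 0.0 = idle, 1.0 = saturated


class MultipathSender:
    """Toy model of one logical connection spraying packets over many paths.

    Illustrative only; this is not the MRC algorithm.
    """

    def __init__(self, paths: list[Path], seed: int = 0) -> None:
        self.paths = paths
        self.rng = random.Random(seed)  # seeded so runs are reproducible

    def _weights(self) -> list[float]:
        # Failed paths get zero weight; healthy ones are weighted by spare capacity.
        return [(1.0 - p.congestion) if p.healthy else 0.0 for p in self.paths]

    def pick_path(self) -> Path:
        weights = self._weights()
        if sum(weights) <= 0.0:
            raise RuntimeError("no usable path")
        return self.rng.choices(self.paths, weights=weights, k=1)[0]

    def send(self, num_packets: int) -> dict[str, int]:
        """Assign each packet to a path and return per-path packet counts."""
        counts = {p.name: 0 for p in self.paths}
        for _ in range(num_packets):
            counts[self.pick_path().name] += 1
        return counts


if __name__ == "__main__":
    paths = [Path("spine-0"), Path("spine-1"), Path("spine-2"), Path("spine-3")]
    sender = MultipathSender(paths)
    print(sender.send(1000))  # roughly even split across the four paths

    paths[2].healthy = False   # simulate a link failure
    paths[1].congestion = 0.9  # simulate a congestion hot spot
    print(sender.send(1000))  # spine-2 gets none; spine-1 gets far fewer
```

Weighting each path by its spare capacity, rather than switching traffic wholesale to a single backup path, keeps every usable link busy, which is the property the article credits with reducing GPU idle time.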

Microsoft’s Fairwater data center and Oracle Cloud Infrastructure’s Abilene facility are among the first large-scale AI factories to rely on MRC for training frontier LLMs. These deployments demonstrate that the technology can deliver high GPU utilization and sustained bandwidth even under heavy congestion.

What This Means

With the MRC specification now openly available, the entire AI industry gains access to a proven method for building gigascale training fabrics without proprietary lock-in. Data center operators can expect fewer network-related slowdowns, higher GPU efficiency, and simpler troubleshooting.

For AI researchers and enterprises, this translates to faster model training cycles, lower costs, and the ability to scale to trillions of parameters. The technology also enables fine-grained visibility into traffic patterns, allowing administrators to optimize performance in real time.

As NVIDIA, Microsoft, and OpenAI continue to push the boundaries of AI, MRC sets a new expectation for network reliability: every GPU gets the bandwidth it needs, when it needs it.