10 Surprising Facts About the $200 Modded Nvidia V100 AI GPU That Beats Modern Midrange Cards

When you think of high-performance AI hardware, the Nvidia Tesla V100 might not be the first card that comes to mind, especially in an era dominated by RTX 4090s and data-center H100s. But a recent DIY project shows this aging workhorse still has serious punch. A YouTuber snagged a Tesla V100 in the SXM form factor for just $100, spent another $100 on a custom adapter and 3D-printed cooling, and turned it into a fully functional PCIe card. The result? AI inference and NVR benchmarks that rival many modern midrange GPUs. Here are the ten things you need to know about this ingenious hack and why it matters for budget-minded AI enthusiasts.

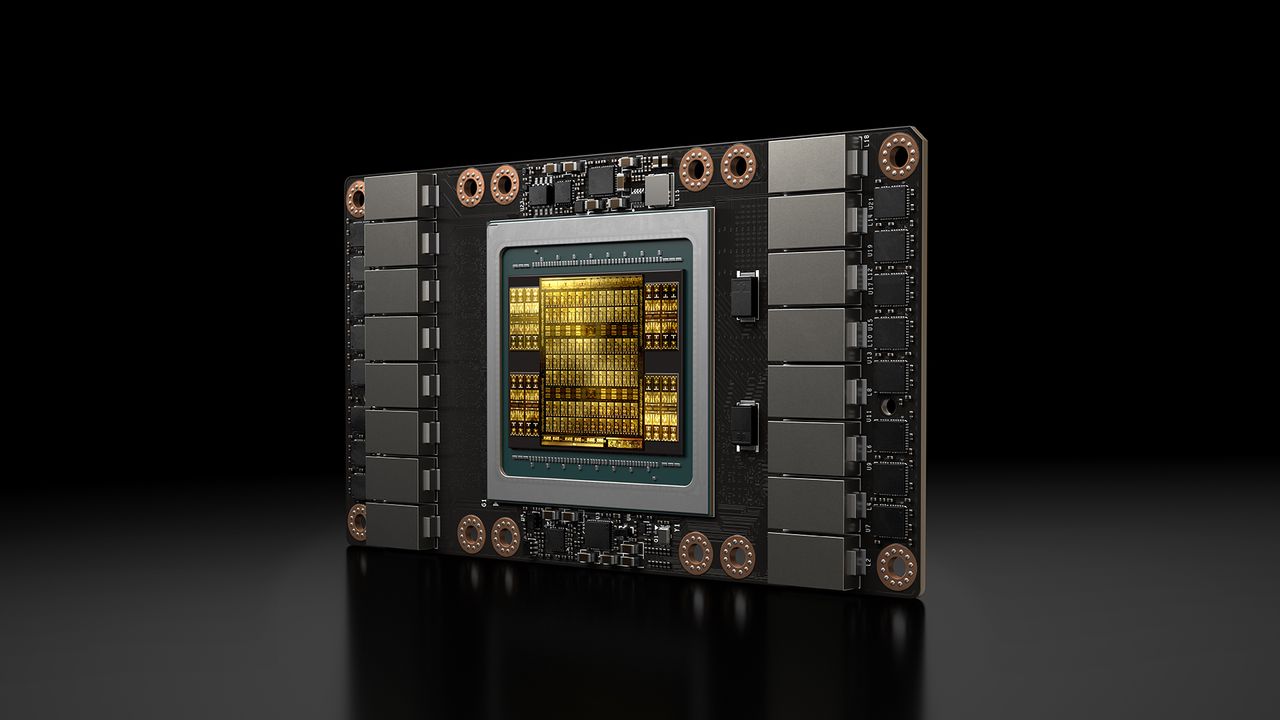

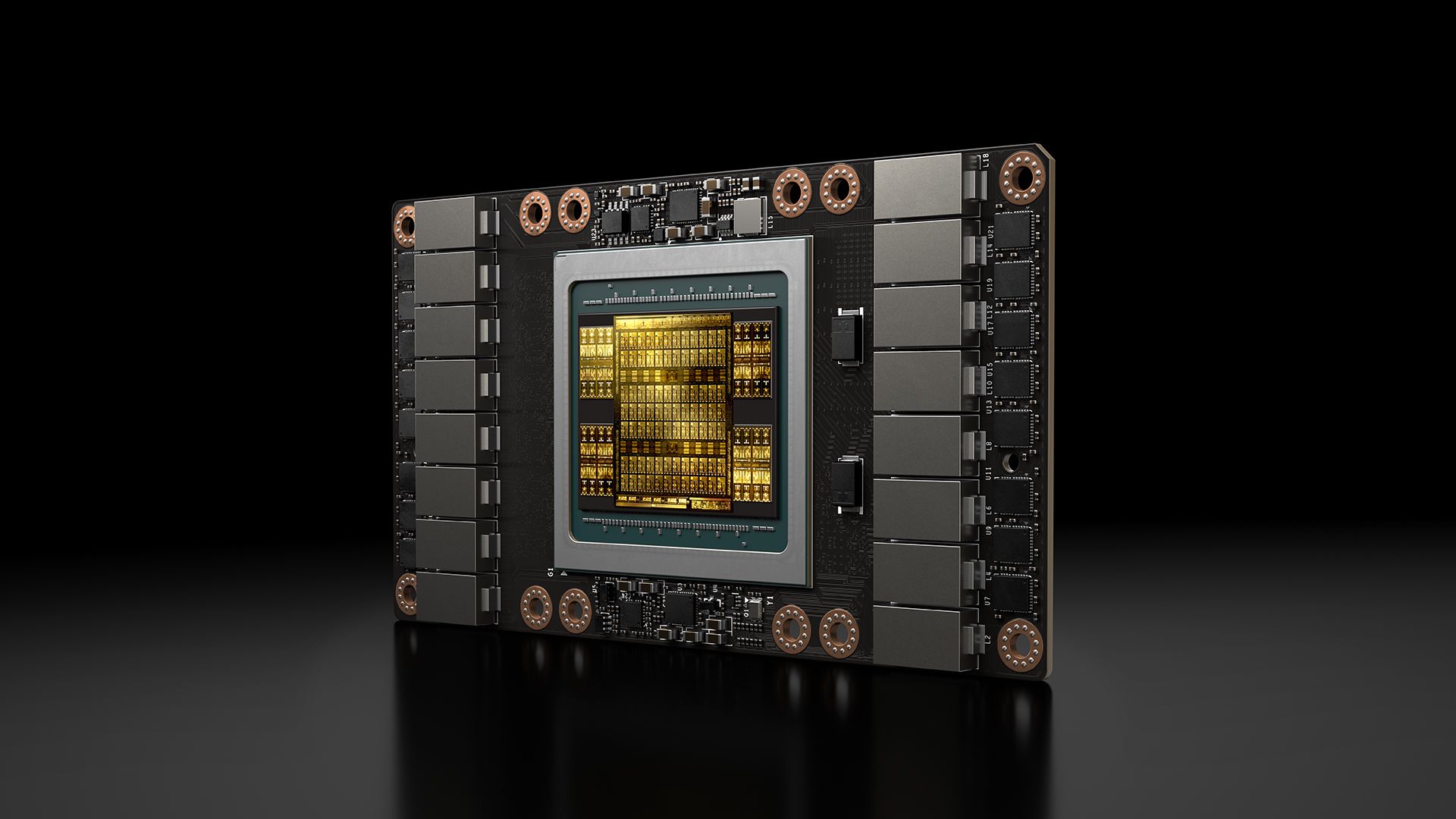

1. The Tesla V100 SXM Is a Data Center Relic, but a Powerful One

Originally launched in 2017, the Tesla V100 SXM is a server-grade GPU based on the Volta architecture. It packs 5120 CUDA cores, 640 Tensor Cores, and 16GB of HBM2 memory. The card was designed for high-performance computing and deep learning, and it typically shipped in servers costing tens of thousands of dollars. Today, used units are flooding the secondary market as data centers upgrade to newer architectures. The YouTuber found his SXM variant for just $100, a fraction of its original price, showing that older enterprise hardware can be a budget goldmine for AI tinkerers.

2. The SXM Version Is Physically Different from Standard V100s

Unlike the standard PCIe-based V100, the SXM variant is a module with a proprietary socketed (mezzanine) connector designed for server boards such as those in Nvidia's DGX systems and Supermicro servers. It has no PCIe edge connector and depends on the host board for both power and data, which makes it unusable in a regular PC without modification. The YouTuber had to design a custom PCB adapter that converts the SXM socket to a PCIe x16 interface, plus 3D-print a cooling duct to fit standard fans. The total hardware cost: around $200, including the GPU and adapter.

3. The Custom PCB Adapter Is the Secret Sauce

The key to this mod is a specially designed PCB that mates the SXM connector to a standard PCIe slot. This isn't off-the-shelf; the YouTuber likely reverse-engineered the pinout and fabricated a small run of boards. The adapter also includes power regulation, because the SXM card draws far more power (around 250W) than the 75W a PCIe x16 slot can supply. The result is a plug-and-play solution (with external power) that makes the V100 SXM behave like a regular GPU in a desktop motherboard. This opens up possibilities for others to replicate the build.
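The power argument comes down to simple arithmetic, sketched below. It assumes the standard PCIe limits of 75W from an x16 slot and 150W per 8-pin auxiliary connector, takes the roughly 250W figure from the text, and treats the question of whether the adapter draws any power from the slot as a parameter, since the project's actual power design is not described here. The function name is illustrative only.

```python
import math

PCIE_SLOT_WATTS = 75    # maximum power an x16 slot supplies to a card
EIGHT_PIN_WATTS = 150   # rated power per 8-pin PCIe auxiliary connector


def aux_connectors_needed(card_watts: float, use_slot_power: bool = True) -> int:
    """Return how many 8-pin connectors cover what the slot cannot supply.

    use_slot_power is an assumption about the adapter design: set it to
    False if the adapter feeds the GPU entirely from external power.
    """
    slot_share = PCIE_SLOT_WATTS if use_slot_power else 0
    shortfall = max(0.0, card_watts - slot_share)
    return math.ceil(shortfall / EIGHT_PIN_WATTS)


if __name__ == "__main__":
    # ~250W is the draw quoted in the article, not a measured value here.
    print(aux_connectors_needed(250))
    print(aux_connectors_needed(250, use_slot_power=False))
```

Under either assumption, a 250W card needs two 8-pin connectors' worth of external power, which is why the adapter cannot rely on the slot alone.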

4. 3D-Printed Cooling Is Surprisingly Effective

Data center GPUs often rely on chassis-wide airflow, so the SXM variant has no fan of its own. To run it in a desktop environment, the YouTuber designed a custom 3D-printed shroud that directs air from two 120mm fans over the heatsink. This keeps temperatures within safe limits even under sustained AI workloads. The project shows that with some CAD skills and a 3D printer, you can overcome the biggest obstacle of repurposing server GPUs: keeping them cool.

5. AI Inference Performance Rivals Modern Midrange Cards

In head-to-head benchmarks, the modded V100 SXM held its own against GPUs like the RTX 3060 and even the RTX 4060 in inference tasks. For large language models (LLMs) running on frameworks like llama.cpp or TensorRT, the V100's mature software stack and Tensor Cores deliver competitive tokens-per-second rates. The 16GB of HBM2 memory also leaves room for models that would need heavier quantization, or would not fit at all, on many 12GB and 8GB midrange cards. This makes the $200 build a surprisingly viable option for running local AI models.
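To see why the extra memory matters, here is a rough back-of-the-envelope check of whether a model's weights fit in a given amount of VRAM. It is a sketch under stated assumptions: weights dominate memory use, the card's capacity is treated as binary gigabytes, and a flat 1.5 GiB allowance (a hypothetical figure, not from the video) stands in for the KV cache and runtime overhead.

```python
GIB = 1024 ** 3


def weights_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


def fits(params_billion: float, bits_per_weight: int, vram_gib: float,
         overhead_gib: float = 1.5) -> bool:
    """True if weights plus an assumed overhead fit in vram_gib.

    overhead_gib is a placeholder for KV cache and runtime buffers;
    real usage depends on context length and the inference framework.
    """
    return weights_gib(params_billion, bits_per_weight) + overhead_gib <= vram_gib


if __name__ == "__main__":
    for vram in (8, 12, 16):
        for params, bits in ((7, 16), (13, 8), (13, 4)):
            verdict = "fits" if fits(params, bits, vram) else "does not fit"
            print(f"{params}B @ {bits}-bit on {vram}GB: {verdict}")
```

By this estimate a 7B model in 16-bit precision fits on a 16GB card but not on a 12GB one, which is the gap the paragraph above describes.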

6. Efficiency Is a Surprise Win for the Older Chip

Despite being manufactured on a 12nm process (compared with the 8nm and 5nm-class processes used for newer consumer cards), the V100 SXM delivers impressive performance per watt in inference workloads. The YouTuber's tests showed it drawing around 200W under load while achieving higher throughput than an RTX 4060 in certain LLM benchmarks. For tasks like batch inference or serving models, the V100's design, optimized for dense math, can make it more efficient than many consumer cards. This challenges the notion that newer always means better.
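Performance per watt is just throughput divided by power, so it is easy to work out how much faster the V100 has to be for the efficiency claim to hold. The sketch below uses the roughly 200W load figure from the text and, as an assumption, the RTX 4060's rated 115W board power rather than a measured draw. The throughput values in the usage lines are placeholders, not results from the video.

```python
def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Efficiency as tokens generated per joule (tokens/s divided by watts)."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tokens_per_second / watts


def break_even_speedup(watts_a: float, watts_b: float) -> float:
    """Throughput ratio card A needs over card B to match B's efficiency."""
    if watts_a <= 0 or watts_b <= 0:
        raise ValueError("power figures must be positive")
    return watts_a / watts_b


if __name__ == "__main__":
    # 200W: load figure quoted in the article. 115W: rated board power (assumed).
    ratio = break_even_speedup(200, 115)
    print(f"V100 must be at least {ratio:.2f}x faster to match efficiency")
    # Placeholder throughputs purely to show the comparison.
    print(tokens_per_joule(100, 200), tokens_per_joule(50, 115))
```

In other words, at these power levels the V100 wins on efficiency only in benchmarks where it is more than about 1.7 times as fast, so the result depends on the workload.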

7. NVR and Video Analytics Are Another Strong Use Case

Beyond LLMs, the V100 SXM shines in NVR (network video recorder) analytics. The GPU's hardware decoders and Tensor Cores accelerate video processing, object detection, and facial recognition. For home or small business security setups, this modded card can handle dozens of streams with real-time AI overlay, a task that would stress a midrange gaming GPU. The $200 price tag makes it an attractive alternative to dedicated AI DVRs.

8. Software Compatibility Is Broad, but Not Perfect

Because the V100 is a compute-focused card, it lacks display outputs. That's fine for headless AI servers, but you'll need a separate GPU for video output. The card is supported by Nvidia drivers (including CUDA and TensorRT) on Linux and Windows, though Volta is an older architecture, so it is worth checking that the driver and CUDA toolkit versions you plan to use still target it. Consumer applications such as games are not optimized for its server drivers. For AI inference and video analytics, though, compatibility is excellent. The mod also requires a motherboard with PCIe bifurcation support or a second GPU for initial setup.
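On a headless box, a quick way to confirm the driver sees the card is to query `nvidia-smi`. The sketch below wraps that call and parses its CSV output; the helper names are illustrative, and the sample line in the usage note is a made-up example of the format rather than output captured from the modded card.

```python
import shutil
import subprocess

QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.total,driver_version",
    "--format=csv,noheader,nounits",
]


def parse_gpu_rows(csv_text: str) -> list:
    """Parse 'name, memory_mib, driver' lines into a list of dicts."""
    gpus = []
    for line in csv_text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        name, mem_mib, driver = [field.strip() for field in line.split(",")]
        gpus.append({"name": name, "memory_mib": int(mem_mib), "driver": driver})
    return gpus


def list_gpus() -> list:
    """Return detected GPUs, or an empty list if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    except subprocess.CalledProcessError:
        return []  # driver installed but not talking to any GPU
    return parse_gpu_rows(result.stdout)


if __name__ == "__main__":
    for gpu in list_gpus():
        print(gpu)
```

For example, `parse_gpu_rows("Tesla V100-SXM2-16GB, 16384, 535.00")` returns one entry with `memory_mib` equal to `16384`. An empty result from `list_gpus()` means the driver is missing or the adapter is not enumerating the card on the PCIe bus.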

9. The Biggest Limitation: Only 16GB VRAM

While 16GB of HBM2 is ample for many models, it is a hard ceiling for the largest LLMs or multi-model deployments. Models like Llama-3-70B need more VRAM even with quantization: at 4 bits per weight, 70 billion parameters come to roughly 35GB of weights alone. The V100's memory cannot be upgraded, and its memory bandwidth (900GB/s) is lower than that of modern H100s. For budget AI enthusiasts, however, it handles 7B to 13B parameter models comfortably. Future projects might explore linking multiple modded V100s via NVLink (if the adapter supports it), but that would increase complexity and cost.
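Memory bandwidth also puts a rough upper bound on single-stream generation speed. A common rule of thumb, used here as an assumption rather than a measured result, is that generating each token requires reading every weight once, so the ceiling is bandwidth divided by model size. The sketch below applies that to the 900GB/s figure from the text; real throughput will be lower once compute, KV cache reads, and framework overhead are included.

```python
def model_gb(params_billion: float, bits_per_weight: int) -> float:
    """Weight size in decimal GB (1e9 params times bytes per param)."""
    return params_billion * bits_per_weight / 8


def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, params_billion: float,
                                bits_per_weight: int) -> float:
    """Bandwidth-bound upper limit on tokens/s for one generation stream.

    Assumes every weight is read once per token and nothing else
    competes for memory bandwidth, which is optimistic.
    """
    return bandwidth_gb_s / model_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    V100_BANDWIDTH_GB_S = 900  # figure quoted in the article
    for params, bits in ((7, 16), (13, 8), (13, 4)):
        ceiling = decode_ceiling_tokens_per_s(V100_BANDWIDTH_GB_S, params, bits)
        print(f"{params}B @ {bits}-bit: at most ~{ceiling:.0f} tokens/s")
```

The estimate suggests why 7B to 13B models feel comfortable on this card: even the optimistic ceiling for a 7B model in 16-bit precision is in the tens of tokens per second, and quantized models raise it further.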

10. This Mod Opens a Path for Sustainable AI Hardware

The V100 SXM hack is more than a cool project: it's a proof of concept for reusing enterprise e-waste. Instead of buying a new $300+ midrange GPU, enthusiasts can rescue data center GPUs from landfills and give them a second life in budget AI rigs. The total cost of $200 is hard to beat for the performance offered. As AI goes mainstream, projects like this democratize access to high-performance inference, letting hobbyists, students, and startups run local models without breaking the bank.

In conclusion, the modded Nvidia V100 SXM proves that older hardware, when paired with ingenuity, can still compete in modern AI workloads. For $200, you get a GPU that matches or exceeds many midrange cards in inference efficiency, NVR analytics, and LLM performance. It's not for everyone, since it requires DIY skills and a tolerance for tinkering, but it's a compelling option for those on a budget. As the community builds on this idea, we may see more 'Franken-GPUs' emerge, pushing the boundaries of what's possible with repurposed technology.
