How Meta’s Adaptive Ranking Model Revolutionizes Ad Serving at Scale

Meta’s new Adaptive Ranking Model tackles the inference trilemma for LLM-scale ad models, using intelligent request routing to balance complexity, latency, and cost. Meta reports a +3% increase in ad conversions and a +5% increase in CTR for targeted users.

Introduction

Meta has long been at the forefront of AI-powered recommendation systems, continuously pushing boundaries to enhance user experiences and advertiser outcomes. Now, the company is taking a bold step forward by scaling its ad recommendation runtime models to the size and complexity of large language models (LLMs). This ambitious move aims to achieve a deeper, more nuanced understanding of people’s interests and intentions. However, such scaling introduces a critical challenge: balancing massive model complexity with the real-time, low-latency demands of a global platform serving billions.

To address this, Meta developed the Adaptive Ranking Model, a system that effectively “bends” the inference scaling curve, delivering high return on investment (ROI) and industry-leading efficiency. Rather than applying a one-size-fits-all inference approach, the model dynamically adjusts its complexity based on each user’s context and intent, so that each request is handled by a model that is both effective and efficient for that request. This allows Meta Ads to maintain strict sub-second latency while providing a high-quality experience for every person.

The Inference Trilemma

At the heart of Meta’s challenge lies what it calls the “inference trilemma.” As models grow larger and more complex, they demand more compute and memory. Yet the service must also respond with low latency (sub-second) and remain cost-efficient enough to operate at global scale. Balancing these three forces (complexity, speed, and cost) is a fundamental tension in AI serving. The Adaptive Ranking Model was designed to resolve this trilemma head-on.

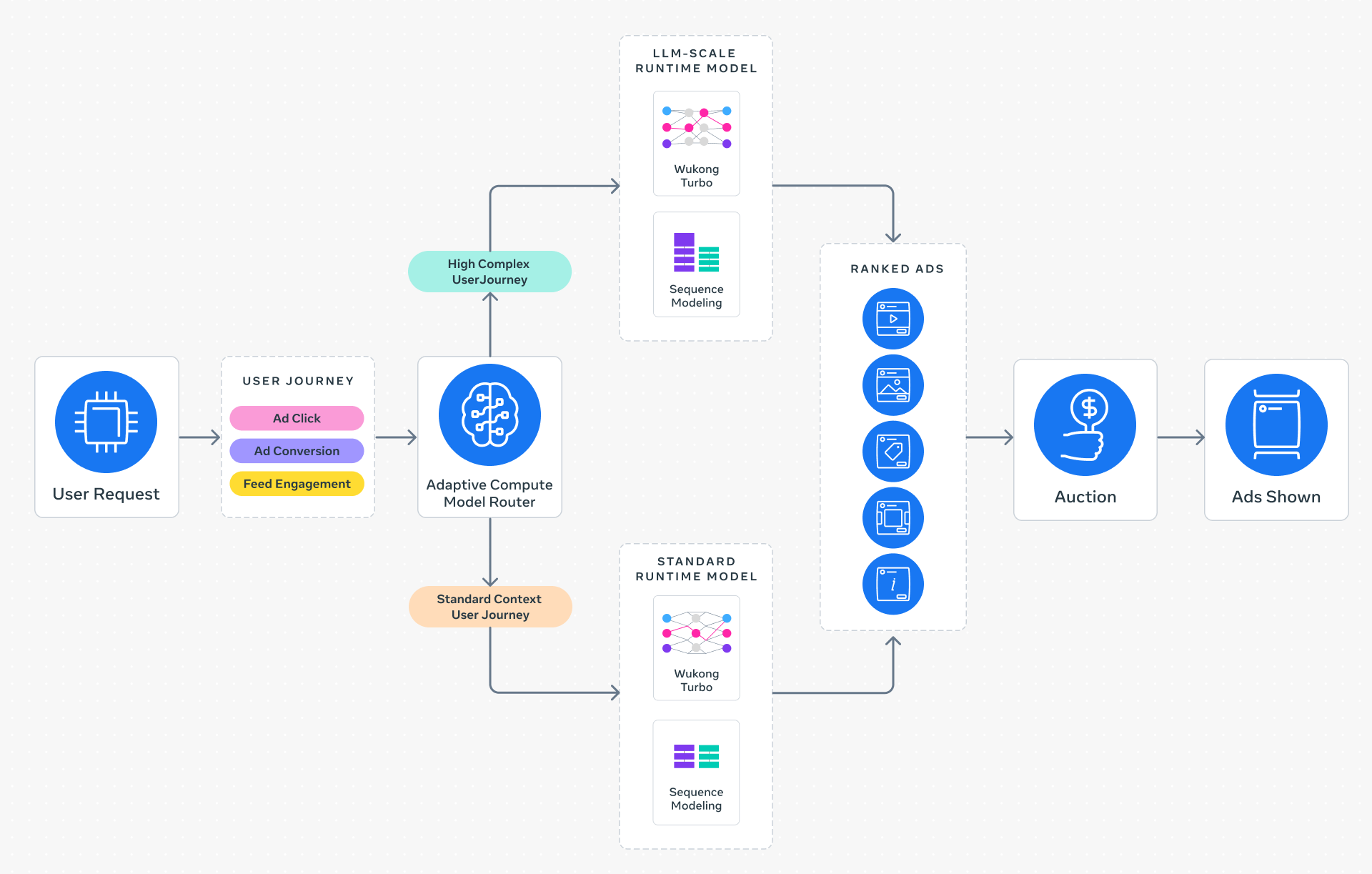

How the Adaptive Ranking Model Works

Instead of routing every ad request through a single, massive model, the Adaptive Ranking Model uses intelligent request routing. It assesses the user’s current context and inferred intent, then selects the most appropriate model variant—from a lightweight version for simple requests to a full LLM-scale model for complex, high-value interactions. This dynamic alignment ensures that computing resources are used only where they add the most value, dramatically improving efficiency without sacrificing quality.
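The routing idea described above can be sketched in a few lines of Python. This is only an illustration, not Meta's implementation: the three tiers, their latency and cost figures, the `value_score` input, and the thresholds are all hypothetical values chosen to make the example concrete.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    name: str
    latency_ms: float     # assumed typical inference latency (hypothetical)
    relative_cost: float  # assumed compute cost in arbitrary units (hypothetical)


# Hypothetical tiers, ordered from cheapest to most capable.
VARIANTS = [
    ModelVariant("light", latency_ms=5.0, relative_cost=1.0),
    ModelVariant("medium", latency_ms=40.0, relative_cost=8.0),
    ModelVariant("llm_scale", latency_ms=300.0, relative_cost=100.0),
]


def route(value_score: float, latency_budget_ms: float) -> ModelVariant:
    """Pick the most capable variant the request warrants that fits the budget.

    value_score is a hypothetical number in [0, 1] standing in for whatever
    context and intent signals a real router would use.
    """
    if not 0.0 <= value_score <= 1.0:
        raise ValueError("value_score must be in [0, 1]")

    # Map the score to the highest tier this request is allowed to use.
    # The 0.8 and 0.4 thresholds are illustrative assumptions.
    if value_score >= 0.8:
        max_tier = 2
    elif value_score >= 0.4:
        max_tier = 1
    else:
        max_tier = 0

    # Walk down from that tier until a variant fits the latency budget.
    for variant in reversed(VARIANTS[: max_tier + 1]):
        if variant.latency_ms <= latency_budget_ms:
            return variant

    # Nothing fits: fall back to the cheapest variant.
    return VARIANTS[0]
```

Under these assumptions, a high-value request with a generous budget, such as `route(0.9, 500.0)`, goes to the largest variant. The same request with a 100 ms budget falls back to the medium tier, and a low-value request stays on the light tier regardless of budget.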

Key Innovations Behind the System

Three transformative innovations underpin the Adaptive Ranking Model’s success:

1. Inference-Efficient Model Scaling

Traditional models are designed without much regard for serving constraints. Meta shifted to a request-centric architecture, where the model structure adapts to the specific needs of each inference call. This allows an LLM-scale model, with billions of parameters, to run in sub-second time, enabling a richer comprehension of user intent without degrading the experience.

2. Model/System Co-Design

Meta’s hardware-aware approach ensures that model architectures are aligned with the capabilities and limitations of the underlying silicon and hardware systems. By co-designing the model with the hardware in mind—whether it’s CPUs, GPUs, or custom accelerators—the system achieves significantly higher utilization in heterogeneous environments, reducing waste and boosting throughput.

3. Reimagined Serving Infrastructure

To support models on the order of a trillion parameters (O(1T)), Meta redesigned the serving stack. This involved leveraging multi-card architectures and hardware-specific optimizations, such as specialized memory management and parallel processing techniques. The result is a stack that serves LLM-scale runtime recommendation-system (RecSys) models with unprecedented efficiency, even at Meta’s massive traffic volumes.
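A rough back-of-the-envelope calculation shows why a model at this scale cannot sit on a single accelerator. The sketch below counts only the memory needed to hold the weights. The 2-byte (16-bit) parameter size and the 80 GB card capacity are assumptions for illustration, not figures from Meta.

```python
def min_cards_for_weights(num_params: int, bytes_per_param: int, card_memory_gb: int) -> int:
    """Lower bound on the number of cards needed just to hold the weights.

    Ignores activations, caches, and replication, so real deployments
    would need more than this.
    """
    total_bytes = num_params * bytes_per_param
    card_bytes = card_memory_gb * 10**9
    # Integer ceiling division avoids floating-point rounding.
    return -(-total_bytes // card_bytes)


# Assumptions: 1 trillion parameters, 16-bit weights, hypothetical 80 GB cards.
cards = min_cards_for_weights(num_params=10**12, bytes_per_param=2, card_memory_gb=80)
print(cards)  # 2 TB of weights / 80 GB per card -> 25
```

Even under these optimistic assumptions the weights alone span dozens of cards, which is consistent with the article's point that multi-card architectures and careful memory management are needed.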

Business Impact and Results

The integration of LLM-scale intelligence into Meta’s ads stack has delivered measurable improvements. Since launching on Instagram in Q4 2025, the Adaptive Ranking Model has produced:

- A +3% increase in ad conversions

- A +5% increase in ad click-through rate (CTR) for targeted users

These gains come without sacrificing system-wide computational efficiency, meaning businesses of all sizes benefit from superior performance. The model effectively bends the inference scaling curve, proving that it is possible to serve LLM-scale models in real-time ad recommendation environments.
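One way to see how selective use of a large model can leave average cost well below the cost of the large model itself is to compute a traffic-weighted cost. The traffic shares and per-request costs below are purely hypothetical and are not Meta's numbers.

```python
def blended_cost(traffic_share: dict[str, float], unit_cost: dict[str, float]) -> float:
    """Average cost per request given the share of traffic sent to each variant."""
    if abs(sum(traffic_share.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * unit_cost[name] for name, share in traffic_share.items())


# Hypothetical per-request costs in arbitrary units.
unit_cost = {"light": 1.0, "medium": 8.0, "llm_scale": 100.0}

# Hypothetical routing mix: most traffic stays on the cheap variant.
routed = blended_cost({"light": 0.7, "medium": 0.2, "llm_scale": 0.1}, unit_cost)

# Baseline: every request goes through the largest model.
uniform = blended_cost({"llm_scale": 1.0}, unit_cost)

print(f"routed: {routed:.1f}, uniform: {uniform:.1f}")
```

With this illustrative mix the routed average comes to roughly an eighth of the cost of sending everything through the largest variant, while the requests judged most valuable still reach it.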

Conclusion

Meta’s Adaptive Ranking Model represents a paradigm shift in how AI-driven advertising systems are designed and deployed. By dynamically routing requests, co-designing with hardware, and rethinking the entire inference stack, Meta has overcome the inference trilemma. The result is a smarter, faster, and more efficient ad serving platform that delivers better outcomes for users and advertisers alike. As AI continues to scale, this approach sets a new standard for balancing complexity with real-world performance.